Generative AI and the “Plagiarism Trap”: What creators and businesses should know

Generative AI can reproduce not only styles but, at times, specific expression protected by copyright. We call this the “plagiarism trap”: model outputs that overlap with copyrighted works because those works were in the training data. This article breaks down how training‑data legality and model memorization can lead to near‑verbatim reproductions, why that matters for creators and businesses, and which practical steps stakeholders should take now. You’ll find clear definitions, an overview of global and Pakistan‑focused legal responses, summaries of landmark lawsuits shaping precedent, and step‑by‑step detection and prevention workflows. We combine legal, technical, and ethical perspectives and offer actionable licensing and governance approaches that creators, platforms, and policymakers can use to lower risk. Where relevant, we point Pakistani creators to connectivity and workflow tips, because dependable mobile data and support are what make the detection and enforcement tools we discuss usable in practice.

What is the generative AI plagiarism trap — and why it matters

The “generative AI plagiarism trap” describes the risk that text and image models will reproduce copyrighted material they encountered during training, creating practical and legal exposure for creators and those who deploy these systems. Models trained on large, scraped datasets can memorize or stitch together fragments of protected works; certain prompts or overfitting increase the chance of near‑verbatim output. The harm is concrete: rights holders can lose market value when derivatives compete with originals, creators face reputational and commercial risk, and platforms may incur takedowns or litigation costs. That’s why dataset curation, provenance tracking, and transparent licensing are essential mitigations.

Technically, models learn statistical patterns rather than “copying” in a human sense. Even so, their outputs can come close enough to protected works to trigger infringement claims. The next section explains how models absorb training data and how generation settings can surface memorized material. These settings include sampling parameters and prompt detail.

How does generative AI produce content that can look like plagiarism?

Generative models compress patterns from large datasets into their weights. When a model stores distinctive sequences, it can reproduce them during inference. This behavior is called memorization. Overfitting to repeated, high‑signal copyrighted passages raises the risk of verbatim reproduction. Targeted prompting, or generating many samples and keeping the closest matches, can then surface those fragments. Examples include image models recreating specific compositions or text models outputting long passages similar to published works. These outcomes aren’t deliberate copying, but they create comparable legal and market problems. Technical mitigations lower but don’t eliminate the risk. These include deduplication, differential privacy, and sampling controls. Legal and governance measures must therefore run in parallel.
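To make memorization concrete, a crude first check is to measure how many word n‑grams in a model output also appear in a known reference work. The sketch below is a simple heuristic, not the method of any particular detection product. The function names and the default n‑gram length of 8 are illustrative assumptions. A high score only flags a passage for human and legal review.

```python
import re


def word_ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Lowercase the text, split it into words, and return its set of word n-grams."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def verbatim_overlap(output: str, reference: str, n: int = 8) -> float:
    """Fraction of the output's word n-grams that also occur in the reference.

    1.0 means every n-word run in the output appears in the reference;
    0.0 means no shared runs (or the output is shorter than n words).
    """
    out_grams = word_ngrams(output, n)
    if not out_grams:
        return 0.0
    ref_grams = word_ngrams(reference, n)
    return len(out_grams & ref_grams) / len(out_grams)
```

Suppose `verbatim_overlap(model_output, published_passage)` returns 0.9. That means 90% of the output's 8‑word sequences also occur in the passage. Checking one output against a whole catalog would need an index of hashed n‑grams rather than pairwise comparison.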

These technical facts lead directly into the IP questions that shape litigation and policy, which we cover next.

What are the main intellectual‑property concerns around AI‑generated content?

When AI is involved, creators and rights holders face a few central IP issues: who qualifies as the author when a human uses AI; whether an AI output is a derivative of an existing work; and how attribution and licensing should operate. Many jurisdictions still require meaningful human creative input for full copyright protection, so minimally edited AI outputs fall into a gray area. Determining whether an output is a derivative work depends on whether it reproduces protected expression rather than just borrowing style or theme. Finally, attribution, licensing, and compensation become messy when training datasets include copyrighted material without clear opt‑ins or licenses.

These concerns underline the need for clearer legal standards and operational practices for provenance and licensing, which the next section explores.

How does copyright law treat generative AI?

Current AI copyright law balances established authorship doctrines with new policy moves focused on transparency and dataset governance. Jurisdictions differ widely. Many legal systems still place human authorship at the center of copyrightability, while regulators and courts pay increasing attention to how training data is sourced and how platforms respond to infringement claims. The result is a patchwork of rules that affects how creators, platforms, and AI developers assign risk and build safeguards. Below we summarize core positions across key jurisdictions to clarify differences in authorship tests and enforcement approaches.

The comparative table below highlights prevailing stances in major regions and where Pakistan currently sits relative to EU and US approaches.

| Jurisdiction | Authorship stance | Mechanisms / notes |
|---|---|---|
| United States | Human authorship prioritized; recent decisions stress meaningful human input for copyright | Courts and the Copyright Office limit AI‑only claims; litigation over dataset use is setting new precedent |
| European Union | Focus on transparency and data rights | The AI Act requires general‑purpose AI model providers to respect text‑and‑data‑mining opt‑outs and to publish a summary of the content used for training |
| Pakistan | AI‑specific rules are limited; general copyright law applies | Absent explicit AI guidance, stakeholders rely on contracts and takedown procedures, creating practical gaps |

Can AI‑generated content be copyrighted?

Short answer: in most leading jurisdictions, outputs created entirely by machines without significant human creative input are unlikely to qualify for traditional copyright. Works that include meaningful human editing, selection, or arrangement are more likely to meet authorship thresholds. Copyright offices and courts have repeatedly emphasized human contribution as a key test. For creators, the practical takeaway is clear: record and demonstrate substantive human involvement — edits, choices, and curatorial decisions — to strengthen ownership claims and to guide licensing and contract language.

The global regulatory picture is complex and varies across regions; understanding those differences matters for cross‑border use and licensing.

AI regulations and IP protection — a global view

This study examines how different jurisdictions are building rules for artificial intelligence and what that means for intellectual property protection. Using a comparative legal approach, it reviews laws and proposals in the United States, the European Union, Japan, and China to identify gaps in how AI‑generated works are treated. The research finds significant variation across regions, with most current IP regimes continuing to require human authorship or inventorship—leaving many AI‑only outputs outside traditional protection. That gap raises questions about innovation, jobs, and how creators will be compensated going forward.

How do global rules differ from Pakistan’s approach?

Broadly, the US and EU are taking different paths but both are moving toward clearer rules: the US via case law and Copyright Office determinations that emphasize human authorship; the EU via regulatory measures that stress dataset transparency, opt‑outs, and data‑subject protections. Pakistan has largely applied existing copyright laws to AI matters without an AI‑specific code, so creators and platforms navigate uncertainty through contracts and takedown practices. In the short term, Pakistani stakeholders can reduce risk by documenting provenance, using explicit licensing terms, and recording human creative contributions while local policy develops. These practical steps help bridge the regulatory gap until clearer guidance appears.

Pakistan’s legal environment creates particular operational challenges for creators and rights holders that deserve focused attention.

AI’s impact on intellectual property in Pakistan

This research examines how artificial intelligence is reshaping intellectual property rights under Pakistan’s legal framework. It places current IP rules alongside AI advances and explores areas where AI increasingly interacts with copyrighted works. The study highlights gaps and argues for updated guidance on emerging conflicts between AI practices and existing IP protections.

Which lawsuits matter most — and what do they signal?

A string of headline cases has sharpened legal questions about dataset scraping, derivative works, and platform liability. Litigation over image and text datasets has pushed courts to ask whether training on copyrighted works without licenses can be infringing. Several rulings and settlements are already influencing how datasets are built and documented. Practically, companies may need to secure licenses, adopt opt‑out mechanisms, or improve provenance tracking to reduce litigation risk and offer clearer remediation for rights holders.

Below is a short summary of landmark cases and their takeaways.

Key AI copyright cases and implications:

| Case | Central claim | Status / implication |
|---|---|---|
| Getty Images v. Stability AI | Alleged use of Getty’s images in model training without permission | Highlights need for licensed image datasets and may encourage settlements or licensing frameworks |
| Authors’ suits v. major LLM providers | Claims that copyrighted text was used in training without consent | Drives demands for disclosure and dataset audits to reduce legal exposure |
| Visual artist claims against image models | Alleged reproduction of artist compositions in generated images | Raises questions about derivatives and potential remedies for creators |

Which landmark disputes are shaping industry practice?

Several disputes involving large image and text collections have become clear signals about acceptable training practices and remedies. Cases that target well‑known image libraries and text corpora have focused attention on whether large‑scale scraping without licenses is lawful, prompting AI developers to re‑examine dataset provenance and to consider licensing or takedown processes proactively. Outcomes and settlements in these matters are changing model training policies, supplier contracts, and platform moderation — nudging the industry toward more cautious dataset governance. For creators, these cases show that legal remedies exist but often hinge on documented evidence of copying and the legal test applied in the relevant jurisdiction.

That legal momentum feeds directly into practical detection and prevention steps covered next.

How do court rulings affect originality and fair use for AI outputs?

Rulings are narrowing the circumstances in which generated content is treated as original. They are also refining fair use (or fair dealing) analysis for models trained on copyrighted works. Courts weigh factors such as the purpose of the use, the nature of the original, and the amount and substantiality of the portion used. They are also paying closer attention to how training works and how similar outputs are to the originals. For developers, this means better dataset hygiene and documentation. For creators, it means preserving evidence that links outputs to training material when needed. Emerging practices include stronger content filtering, dataset opt‑outs, and remuneration schemes for rights holders when commercial services repurpose protected expression.

These changes make it important to combine technical detection with contractual and licensing strategies for stronger protection.

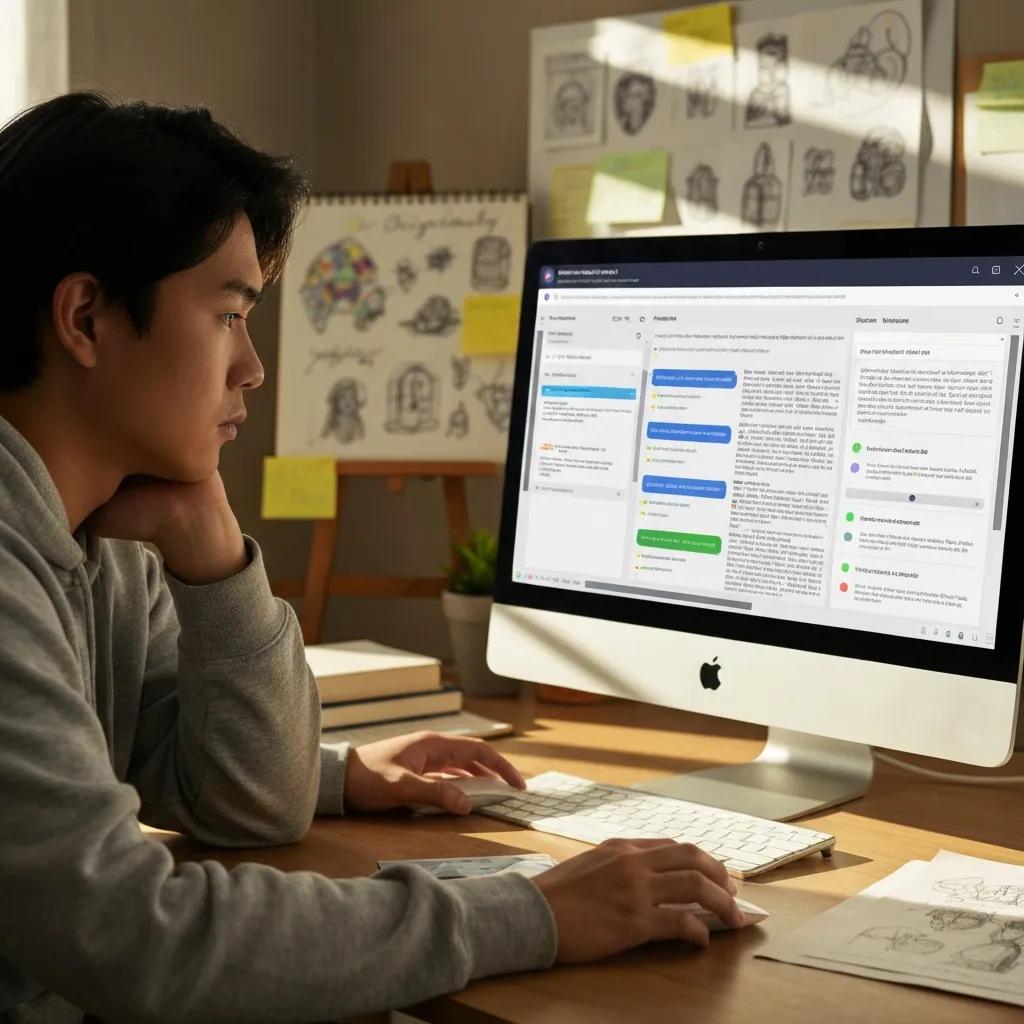

How can creators detect and prevent plagiarism in AI outputs?

Creators need practical detection workflows that pair technical tools with provenance records and human review before publishing or monetizing AI outputs. Detection tools fall into categories like watermark discovery, statistical classifiers that flag model‑like outputs, and provenance tracing that recovers lineage metadata — each with trade‑offs in accuracy and coverage. A practical workflow starts with versioned source control, automated scans against catalogs, human editorial checks, and clear documentation of any human edits applied to AI drafts. This layered approach reduces false positives and strengthens evidence if enforcement becomes necessary.

To help choose the right approach, see the comparison table below that maps tools to media types and operational constraints.

| Tool / approach | Detection method | Pros / cons |
|---|---|---|
| Watermarking (model / output) | Embedded metadata or imperceptible signals | Pros: clear signal when present; Cons: needs adoption by the generator |
| Classifier detectors | Statistical pattern recognition | Pros: scalable for text and images; Cons: false positives, false negatives, and model drift |
| Provenance tracing | Lineage metadata and dataset logs | Pros: strong origin evidence; Cons: requires dataset transparency and standards |

No single tool is foolproof. We recommend a mixed strategy that combines automated detection, human review, and careful recordkeeping to produce defensible originality checks.
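The layered workflow described above can be expressed as a simple routing rule: automated scan first, then human review, then documentation. This is a minimal sketch under stated assumptions. The `ScanResult` fields, the `triage` function, and the 0.5 and 0.1 thresholds are all hypothetical. They would need tuning against your own catalog and false‑positive tolerance.

```python
from dataclasses import dataclass


@dataclass
class ScanResult:
    draft_id: str
    overlap: float         # hypothetical automated score, e.g. share of n-grams matching a catalog (0.0-1.0)
    has_human_edits: bool  # whether editors recorded substantive changes to the AI draft


def triage(result: ScanResult, block_at: float = 0.5, review_at: float = 0.1) -> str:
    """Route a draft to 'block', 'human_review', or 'publish' (illustrative thresholds)."""
    if result.overlap >= block_at:
        return "block"          # strong match: do not publish; keep evidence
    if result.overlap >= review_at or not result.has_human_edits:
        return "human_review"   # uncertain score, or no documented human contribution
    return "publish"            # low overlap and recorded human edits
```

The rule sends drafts with no recorded human edits to review even when the scan is clean. This reflects the authorship point made earlier: the record of human contribution matters as much as the absence of overlap.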

For creators with limited bandwidth, reliable connectivity is essential for running cloud‑based detection tools, uploading evidence, and working with counsel. Affordable, stable mobile data and quick support let Pakistani creators run scans, submit takedowns, and coordinate with platforms without interruption.

What tools and techniques can help detect AI plagiarism?

Key techniques include output‑level watermark checks, classifier tools that flag likely model outputs, and provenance systems that track dataset lineage. Watermarking is effective when widely used, but many models don’t yet include universal watermarks. Classifiers scale well but can be inaccurate or fooled by adversarial changes, so they work best with human review. Provenance offers the strongest chain of custody but depends on transparency from model builders. It relies on dataset manifests, training logs, and signed metadata. Start with automated scans, escalate uncertain cases to human review, and keep all relevant metadata and revision history as evidence.
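Statistical text watermarks show why detection only works when the generator cooperates. The sketch below is a simplified toy version of the published "green list" idea:

- A secret key and the previous token pseudo‑randomly mark a fraction `gamma` of candidate tokens as green.
- A watermarking generator favors green tokens.
- A detector counts the green tokens and computes a z‑score, z = (G − γT) / √(Tγ(1 − γ)). Here T is the number of scored tokens and G is how many of them are green.

The hashing scheme, the key, and `gamma = 0.25` are assumptions for illustration. Real deployments use their own schemes and keys, and without the key this check cannot be run.

```python
import hashlib
import math


def is_green(prev_token: str, token: str, key: str, gamma: float = 0.25) -> bool:
    """Toy rule: hash (key, previous token, token) to a number in [0, 1);
    the token counts as green when that number falls below gamma."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def watermark_z_score(tokens: list[str], key: str, gamma: float = 0.25) -> float:
    """z = (G - gamma*T) / sqrt(T*gamma*(1-gamma)); returns 0.0 if nothing can be scored."""
    t = len(tokens) - 1  # the first token has no predecessor, so it is not scored
    if t < 1:
        return 0.0
    green = sum(is_green(tokens[i - 1], tokens[i], key, gamma) for i in range(1, len(tokens)))
    return (green - gamma * t) / math.sqrt(t * gamma * (1 - gamma))
```

Unwatermarked text should score near 0. A z‑score above about 4 is very unlikely to occur by chance. Paraphrasing or heavy editing lowers the score, which is one reason to pair watermark checks with human review.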

These steps translate into a simple checklist and disciplined workflow we outline next.

What ethical steps should AI content creators follow to preserve originality?

Follow a short, practical ethical checklist: disclose when AI was used, keep provenance records for inputs and outputs, secure licenses or permissions for third‑party material, and apply meaningful human edits before publishing. Transparency builds trust with audiences and downstream users; provenance records support legal claims about authorship and editing; licensing prevents surprises and supports fair pay; and human review preserves creative judgment. For Pakistani creators, a useful habit is to log prompts, save drafts that show edits, and keep licenses for any assets used in training or composition.
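A log of prompts and drafts is easier to defend if it is tamper‑evident. One minimal approach is a hash chain, which is assumed here rather than required by any law or standard. Each entry stores the SHA‑256 of its text plus the hash of the previous entry, so altering an earlier record breaks every later link. The entry fields (`kind`, `text_sha256`, `prev_hash`, `entry_hash`) are hypothetical names.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over canonical JSON (sorted keys) of an entry without its own hash."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], kind: str, text: str) -> dict:
    """Append a prompt or draft (kind e.g. 'prompt', 'ai_draft', 'human_edit') to the chain."""
    entry = {
        "kind": kind,
        "text_sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry["entry_hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every link; any edited or reordered entry makes this return False."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True
```

The log stores hashes, not the drafts themselves, so keep the original files alongside it. The timestamps come from the local clock and are not independently verified. A trusted timestamping service would strengthen that part.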

These ethical practices work alongside legal and technical measures and make enforcement workflows more reliable.

How can rights holders protect IP against AI‑driven infringement?

Protecting IP requires a portfolio approach: contractual licenses that explicitly permit or forbid training, robust data governance, technical safeguards, and clear enforcement procedures. Licensing can define permitted AI uses and set fees; data‑use agreements can require provenance and attribution; technical protections like watermarking, fingerprinting, and metadata create detectable signals; and enforcement workflows should specify takedown steps, evidence standards, and escalation to platforms or courts. Combined, these tools give rights holders practical ways to control how their works are used in model training and deployment.

The table below summarizes effective licensing and governance approaches rights holders and businesses can adopt.

| Strategy | Characteristic | Practical benefit |
|---|---|---|
| Explicit training licenses | Contracts that list allowed AI uses | Limits reuse and creates monetization paths |
| Provenance records | Dataset manifests and signed metadata | Strengthens audits and evidentiary claims |
| Opt‑out mechanisms | Publisher/creator registry to exclude works | Reduces accidental exposure during training |

Legal tools plus technical records together offer the strongest protection and give rights holders clearer options to prevent unauthorized AI use.
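The opt‑out row in the table above can be illustrated with a registry of content hashes that a dataset builder checks before training. This sketch assumes a hypothetical registry of SHA‑256 digests and a corpus held in memory. Exact hashes only catch byte‑identical copies, so a resized image or re‑encoded file would slip through. Perceptual fingerprints address that gap.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Identify a work by the SHA-256 of its bytes (matches exact copies only)."""
    return hashlib.sha256(data).hexdigest()


def filter_opted_out(corpus: dict[str, bytes], registry: set[str]) -> dict[str, bytes]:
    """Return the corpus without any item whose content hash is in the opt-out registry."""
    return {name: data for name, data in corpus.items() if content_id(data) not in registry}
```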

Creators who need higher data throughput for large uploads or cloud scans can choose packages that prioritize stable mobile data. Ufone’s support channels can help with subscriptions and troubleshooting for connectivity and package management. These are helpline 333, email customercare@ufone.com, WhatsApp Self Service, and Ufone Stores. Note: Ufone provides connectivity and support; it is not a legal or detection vendor.

Which licensing and data governance tactics best protect IP?

Good licensing spells out permitted AI activities, requires attribution or compensation for training or commercial use, and includes audit and takedown clauses. Rights holders should push for data‑use licenses that list allowed processing, require provenance metadata, and allow audits. Contracts can mandate removal of infringing outputs, set notice‑and‑takedown procedures, and define remuneration for commercial exploitation. Practical first steps: catalog your works, publish clear licensing terms, and keep dataset manifests that record origins and permitted uses.
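A dataset manifest entry that records origin and permitted uses can be signed so that later edits are detectable. The sketch below uses HMAC‑SHA256 from Python’s standard library as a stand‑in for a signature. The field names and key handling are illustrative assumptions. HMAC needs a shared secret, so an audit trail shared with third parties would more likely use public‑key signatures or a provenance standard.

```python
import hashlib
import hmac
import json


def sign_manifest_entry(entry: dict, key: bytes) -> str:
    """HMAC-SHA256 over the entry's canonical JSON (sorted keys, compact separators)."""
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_manifest_entry(entry: dict, signature: str, key: bytes) -> bool:
    """True only if the entry is unchanged since it was signed with this key."""
    return hmac.compare_digest(sign_manifest_entry(entry, key), signature)


def use_permitted(entry: dict, use: str) -> bool:
    """Check a requested use (e.g. 'ai_training') against the entry's permitted uses."""
    return use in entry.get("permitted_uses", [])
```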

How do watermarking and related technologies protect creators?

Digital watermarking embeds detectable markers in assets or outputs to signal origin or permitted use; fingerprinting identifies characteristic features to match outputs with source libraries; and provenance metadata records lineage for verification. Watermarking is most useful when model providers adopt it broadly, while fingerprinting helps detect matches even without cooperation. Limitations include inconsistent adoption, vulnerability to transformations, and the need for cross‑platform standards. When combined with contracts, persistent metadata, and monitoring, these technologies deliver the strongest protection.
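Fingerprinting differs from watermarking because it needs no cooperation from the generator. You compute a compact signature from your own work and compare it with suspect outputs. Below is a sketch of average hashing (aHash), one simple perceptual‑hash technique. It assumes the image has already been converted to grayscale and downscaled to a small grid such as 8×8. Libraries such as Python’s `imagehash` offer production versions; the function names here are illustrative.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """Return an integer whose bits mark pixels brighter than the grid's mean.

    Assumes `pixels` is a small grayscale grid (e.g. 8x8, values 0-255)
    already downscaled from the original image.
    """
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)  # one bit per pixel, row by row
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes; small values suggest near-duplicates."""
    return bin(a ^ b).count("1")
```

Two images whose 64‑bit hashes differ in only a few bits are likely near‑duplicates; the exact threshold is a judgment call. aHash tolerates uniform brightness changes but not crops or rotations. That illustrates the vulnerability to transformations noted above.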

These technologies are evolving quickly and work best alongside licensing and governance measures.

What’s next for generative AI and IP rights?

Expect growing regulatory focus on transparency, stronger dataset disclosure requirements, and more litigation that tests the line between training and infringement. At the same time, detection and provenance tools will improve, making enforcement more practical. Policymakers are considering measures like mandatory dataset manifests, opt‑out registries for creators, and clearer liability rules for model builders. Industry responses will likely include cleaner dataset curation, licensing marketplaces, and technical standards for watermarking and provenance. For creators and businesses, the lesson is to move from ad‑hoc defenses to integrated IP strategies that combine legal, technical, and operational safeguards.

How are laws and regulations adapting to AI challenges?

Regulatory trends favor disclosure obligations, dataset provenance, and liability frameworks for model builders whose systems cause harm or infringe rights. Regional initiatives are pushing provenance requirements and stronger notice‑and‑takedown procedures, while courts keep refining authorship and fair use tests. Stakeholders should track proposals, prepare to meet disclosure rules, and push for practical opt‑out and licensing solutions that protect creators without stifling innovation. Documenting datasets and licensing now will make compliance easier as rules tighten.

Staying informed and building governance capabilities today reduces friction tomorrow.

What are the wider ethical and social effects of AI on creativity and IP?

Generative AI can expand access to creative tools while also threatening creator incomes through market dilution and unauthorized derivatives. That tension raises ethical issues about fair pay, cultural appropriation, and creative authenticity. Society may see shifts in how creative work is valued, potential erosion of niche markets, and new collaborative possibilities between humans and AI systems. Policy and industry solutions — licensing marketplaces, provenance standards, and targeted support for affected creators — can reduce harms while keeping the benefits of wider access. Collective action by platforms, rights holders, and policymakers will shape whether AI augments creativity or accelerates displacement.

For creators and organizations ready to act, reliable connectivity and accessible support services are practical necessities. Some creators need stable mobile data or help managing subscriptions for cloud detection and enforcement workflows. For them, Ufone (Pakistan Telecommunication Company Limited – PTCL) provides 4G and 3G SIMs, prepaid and postpaid packages, internet bundles, devices, and value‑added services. Ufone’s customer support options for subscription help and troubleshooting are helpline 333, email customercare@ufone.com, WhatsApp Self Service, and Ufone Stores.

This article lays out legal, technical, and ethical steps creators and businesses can take now to navigate the generative AI plagiarism trap and to strengthen IP protections as the landscape evolves.

Frequently asked questions

What steps can creators take to make sure AI‑generated content is original?

Use a multi‑layered approach: keep provenance records, apply meaningful human edits, and run plagiarism and classifier checks. Log prompts and drafts to document the creative process. Check for watermarks where the generating tool supports them. Combining technical checks with clear ethical practices gives you stronger protection.

How can stakeholders in Pakistan handle the current AI and copyright landscape?

In Pakistan, adopt interim measures: assert provenance, use explicit licensing, and document human contributions to AI outputs. Clear contracts that define rights and responsibilities help manage risk under existing copyright rules. Stay informed about global developments and engage in local policy discussions to help shape future guidance.

What do high‑profile lawsuits mean for AI developers and creators?

Major lawsuits underline risks tied to dataset scraping and copyright claims. They push developers toward cleaner dataset curation and better rights management. For creators, these cases show the value of careful documentation, licensing, and proactive enforcement to protect their work.

How important is human creativity for copyright in AI works?

Human input matters a lot. Most legal systems require meaningful human creative contribution for a work to receive copyright protection. Editing, selecting, or arranging AI outputs strengthens authorship claims and lowers the chance an output is treated as uncopyrightable.

How can creators use digital watermarking effectively?

Watermarking helps by embedding identifiers in content or outputs, making ownership easier to prove. It works best when widely adopted by platforms and tools. Creators should combine watermarks with original records of their work to build a strong case if disputes arise.

What ethical concerns come with AI‑generated content?

Ethical concerns include authorship clarity, cultural appropriation, and fair compensation. AI can replicate styles and dilute markets for original creators. Addressing these issues requires transparent practices, proper attribution, and respect for creators’ rights.

What should creators and businesses expect next in AI and copyright law?

Expect more regulation around transparency and dataset governance, clearer liability rules, and continued litigation shaping standards. Prepare by documenting datasets, using ethical licensing, and working with policymakers and industry groups to find practical solutions.

Conclusion

The generative AI plagiarism trap is a real challenge for creators and businesses. By combining detection, clear licensing, provenance practices, and meaningful human input, stakeholders can lower legal and commercial risk while still benefiting from AI tools. Keep informed, document your workflows, and use reliable connectivity and support to make prevention and enforcement practical. Explore the resources in this guide and reach out for the connectivity support you need to protect your creative work.